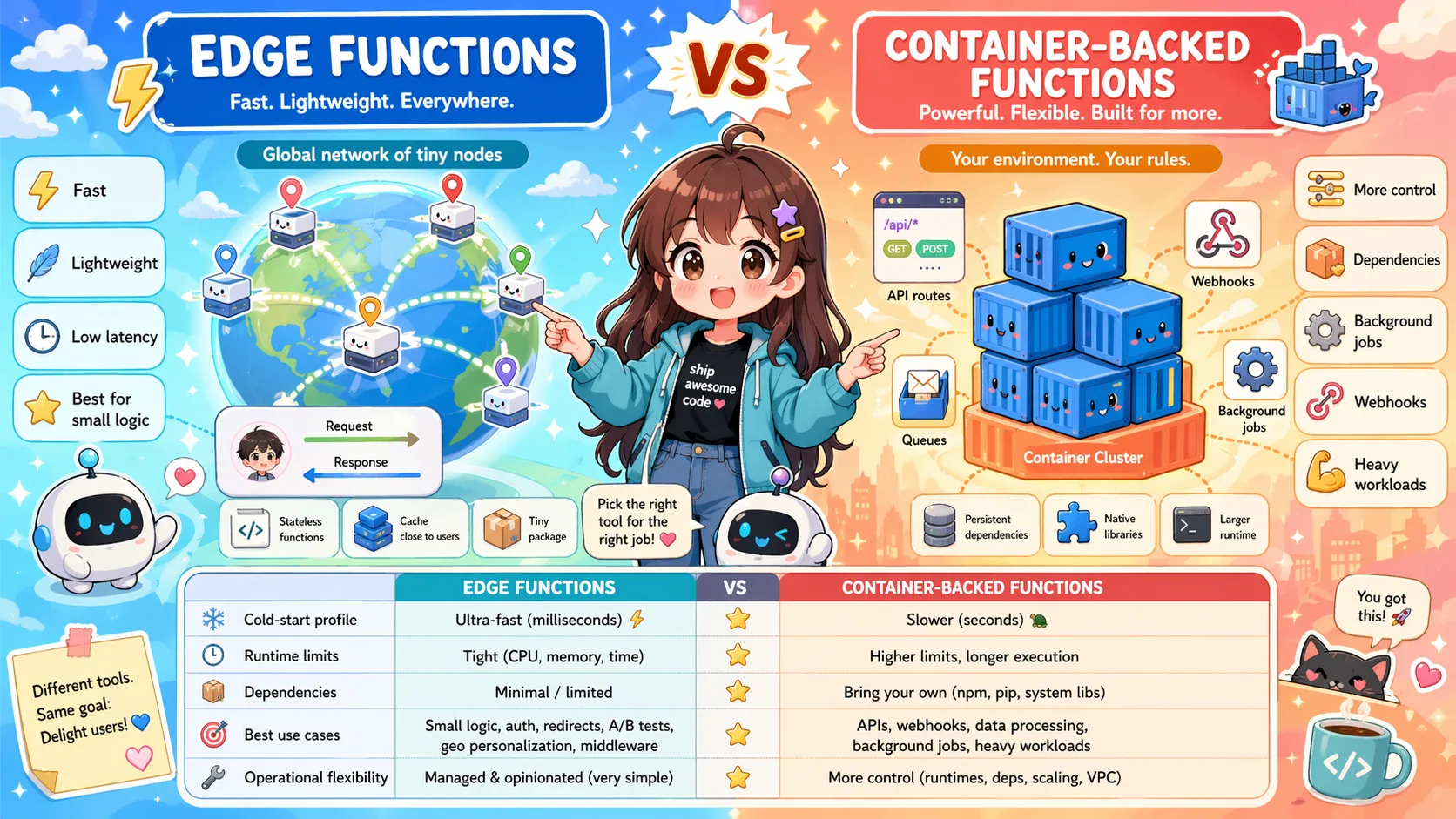

Edge Functions vs Container-Backed Functions

Edge functions and container-backed functions solve different problems. Learn when to use edge isolates and when full containers are a better backend runtime.

Edge functions and container-backed functions are both called “serverless”, but they are built for different jobs.

Edge functions are optimized for running small pieces of logic close to users. Container-backed functions are optimized for running backend code in isolated runtime environments with broader dependency support.

Neither model is universally better. The right choice depends on the workload.

What edge functions are good at

Edge functions are useful when latency near the user matters and the logic is relatively small.

Good use cases include:

- request rewriting;

- redirects;

- authentication checks;

- personalization at the edge;

- lightweight API responses;

- cache decisions;

- geo-based routing;

- small transformations.

The main advantage is placement. Running code near the user can reduce network latency.

What container-backed functions are good at

Container-backed functions are better when the workload looks more like backend application code.

Good use cases include:

- webhook processors;

- scheduled jobs;

- background jobs;

- AI agent tools;

- APIs with larger dependencies;

- native modules;

- Python or Go workloads;

- file processing;

- multi-step workflows;

- private API integrations.

The main advantages are runtime flexibility and isolation.

The dependency question

Edge runtimes often impose restrictions. Because many of them run code in lightweight isolates rather than full containers, they may not support the same APIs, libraries, native dependencies, or runtime behavior as a full container.

That is fine for lightweight logic. It can be painful for backend workloads that depend on ordinary Node.js packages, Python libraries, operating-system behavior, or long-running processes.

A container-backed runtime gives you an environment closer to how backend code normally runs.

The latency question

Edge functions can be faster for user-facing request handling when geography matters.

But not every backend workload is latency-sensitive in that way.

A webhook from Stripe, a nightly cron job, a background file processor, or an AI summarization job usually cares more about reliability, logs, dependencies, and execution model than about being close to an end user.

For those workloads, containers may be a better trade-off.

The AI workload question

AI workloads often need:

- model SDKs;

- vector database clients;

- document parsers;

- external API clients;

- background execution;

- retries;

- longer processing time;

- structured logs;

- environment variables;

- tool endpoints.

Some AI tasks can run at the edge. Many production agent backends fit better in a container-backed runtime.
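Two items from the list above, retries and structured logs, can be sketched together. This is a minimal illustration rather than a recommended implementation: the function name `call_with_retries`, its parameters, and the log field names are all hypothetical, and `task` stands in for any model or external API call.

```python
import json
import logging
import time

logger = logging.getLogger("agent")


def call_with_retries(task, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Run task(), retrying on any exception with exponential backoff.

    Hypothetical helper: `task` stands in for a model or API call.
    `sleep` is injectable so the backoff can be observed without waiting.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            result = task()
            # One JSON object per log line keeps the logs machine-readable.
            logger.info(json.dumps({"event": "task_ok", "attempt": attempt}))
            return result
        except Exception as exc:
            logger.warning(json.dumps(
                {"event": "task_failed", "attempt": attempt, "error": str(exc)}
            ))
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Delay doubles each time: base_delay, 2 * base_delay, ...
            sleep(base_delay * 2 ** (attempt - 1))
```

A loop like this can spend most of its time waiting between attempts, which is one reason longer processing time appears on the same list.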

Example: edge function use case

A good edge function follows a flow like this:

request arrives

→ check country

→ rewrite route

→ add header

→ return quickly

This is small, fast, and user-facing.
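The flow above can be sketched as a single pure function. Python is used here only to show the logic, since many edge platforms run JavaScript or WebAssembly instead. The `Request` type, the `COUNTRY_PREFIXES` table, and the `x-geo-country` header name are assumptions made for illustration, not any platform's API.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from country code to a localized path prefix.
COUNTRY_PREFIXES = {"DE": "/de", "FR": "/fr"}


@dataclass
class Request:
    path: str
    country: str  # assumed to be supplied by the edge platform
    headers: dict = field(default_factory=dict)


def already_localized(path: str, prefix: str) -> bool:
    """True if the path is the prefix itself or sits underneath it."""
    return path == prefix or path.startswith(prefix + "/")


def handle_at_edge(request: Request) -> Request:
    """Check country, rewrite the route, add a header, return quickly."""
    prefix = COUNTRY_PREFIXES.get(request.country, "")
    path = request.path
    if prefix and not already_localized(path, prefix):
        path = prefix + path
    # Copy the headers instead of mutating the incoming request.
    headers = {**request.headers, "x-geo-country": request.country}
    return Request(path=path, country=request.country, headers=headers)
```

The handler does no I/O and needs no third-party packages, which is the kind of logic that tends to fit edge runtime constraints.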

Example: container-backed function use case

A good container-backed function follows a flow like this:

webhook arrives

→ verify signature

→ start background job

→ call external APIs

→ run LLM classification

→ store result

→ send notification

This is backend work. It needs runtime flexibility and observability more than edge placement.
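A rough sketch of that flow, using only the Python standard library, might look like the following. It assumes a generic HMAC-SHA256 signature over the raw request body, which is not any specific provider's scheme (real providers often add timestamps and their own header formats). The in-memory queue, the `classify` placeholder, and the `store` and `notify` parameters are hypothetical stand-ins for a durable job queue, an LLM call, a database, and a notification service. The external API step is omitted for brevity.

```python
import hashlib
import hmac
import json
import queue

# Stand-in for a real job queue; production code would use a durable one.
jobs = queue.Queue()


def verify_signature(secret: bytes, body: bytes, signature_hex: str) -> bool:
    """Compare the expected HMAC-SHA256 digest to the one provided."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_hex)


def handle_webhook(secret: bytes, body: bytes, signature_hex: str) -> int:
    """Verify the signature, enqueue the event, and return an HTTP status."""
    if not verify_signature(secret, body, signature_hex):
        return 401
    jobs.put(json.loads(body))  # hand off to background processing
    return 202  # accepted; the real work happens later


def classify(event: dict) -> str:
    """Placeholder for an LLM classification call (hypothetical rule)."""
    return "billing" if "invoice" in event.get("type", "") else "other"


def process_next_job(store: dict, notify) -> None:
    """Background step: classify, store the result, send a notification."""
    event = jobs.get_nowait()
    label = classify(event)
    store[event["id"]] = label
    notify(f"event {event['id']} classified as {label}")
```

Splitting the handler from the background step lets the webhook respond quickly while the slower steps run afterwards.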

Where Inquir Compute fits

Inquir Compute focuses on container-backed serverless functions for backend workloads. That makes it useful for:

- AI agent backends;

- cron jobs;

- webhook processors;

- background jobs;

- API routes;

- multi-step workflows;

- Node.js, Python, and Go functions.

It is not trying to replace edge platforms for edge-specific workloads. Instead, it gives backend automation a place to run without requiring Kubernetes.

Choose edge functions when

Use edge functions when:

- the logic is small;

- the request is user-facing;

- geographic latency matters;

- the code fits edge runtime constraints;

- caching or routing is central;

- you do not need heavy dependencies.

Choose container-backed functions when

Use container-backed functions when:

- you need a fuller runtime;

- dependencies matter;

- work may run longer;

- you need background jobs;

- you process webhooks;

- you run scheduled jobs;

- you expose AI agent tools;

- logs and execution history are important.
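The two checklists can be condensed into a rough heuristic. The sketch below is only one possible encoding: the `Workload` fields, the `suggest_runtime` name, and the rule that any container-style requirement outweighs edge placement are assumptions for illustration, not a formal decision procedure.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a workload, mirroring the checklists."""
    user_facing: bool = False
    geo_latency_matters: bool = False
    heavy_dependencies: bool = False
    long_running: bool = False
    background_or_scheduled: bool = False
    needs_execution_history: bool = False


def suggest_runtime(w: Workload) -> str:
    """Return "container", "edge", or "either" for a workload."""
    needs_container = (
        w.heavy_dependencies
        or w.long_running
        or w.background_or_scheduled
        or w.needs_execution_history
    )
    if needs_container:
        # Assumption: hard runtime needs take priority over placement.
        return "container"
    if w.user_facing and w.geo_latency_matters:
        return "edge"
    return "either"
```

For example, `suggest_runtime(Workload(user_facing=True, geo_latency_matters=True))` returns `"edge"`, while adding `heavy_dependencies=True` to the same workload returns `"container"`.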

Can you use both?

Yes. A common architecture is:

Edge platform

→ user-facing routing and caching

Container-backed runtime

→ backend jobs, APIs, webhooks, schedules, AI tools

The two models can complement each other.

Conclusion

Edge functions and container-backed functions solve different problems.

Edge is best for small, latency-sensitive logic close to users. Containers are better for backend workloads that need dependencies, isolation, schedules, jobs, and observability.

The best architecture is not about choosing the trendiest runtime. It is about matching the runtime to the workload.