Serverless for AI Agents: What Breaks After the Prototype

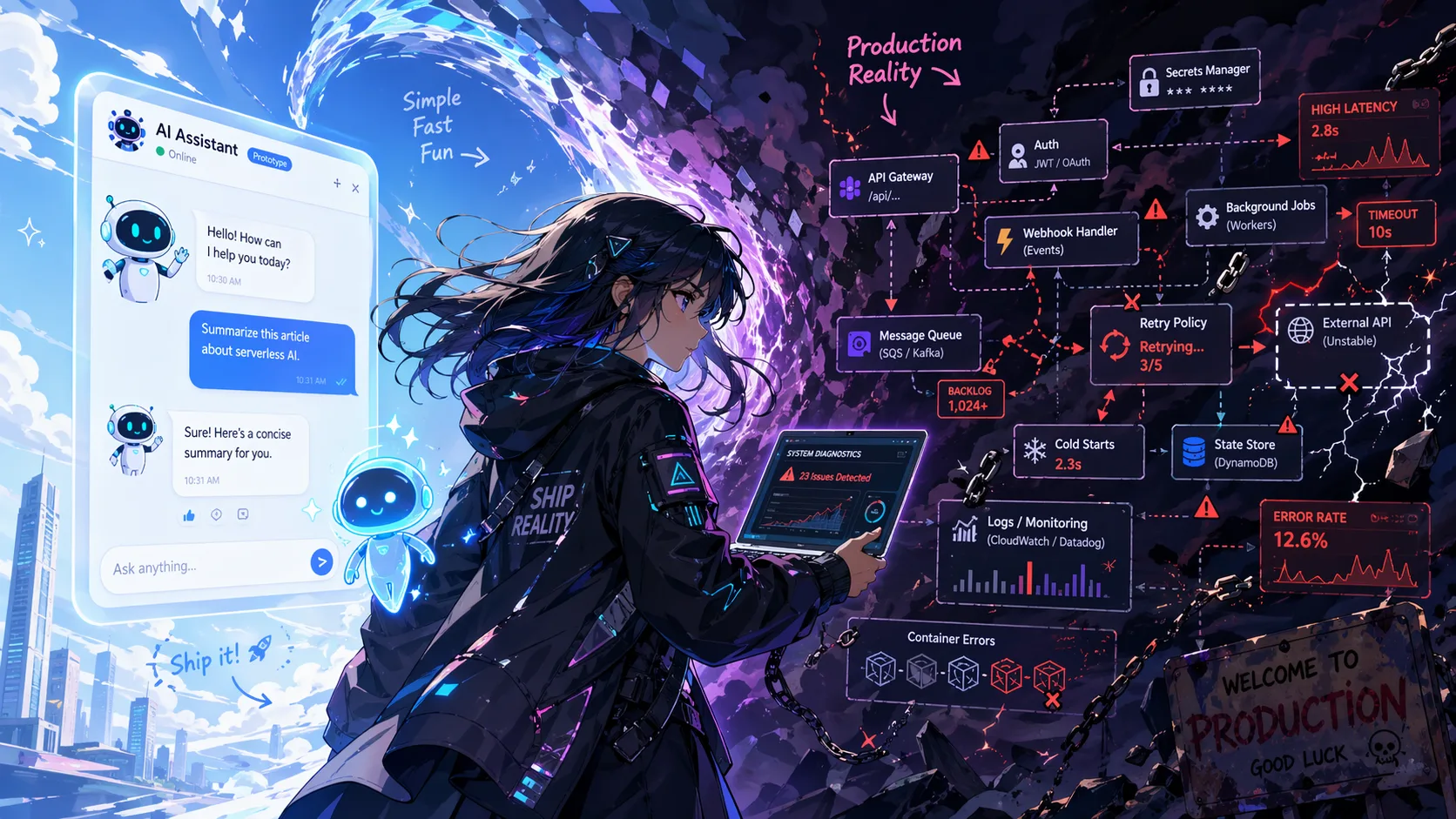

The first version of an AI agent is usually simple. You write a prompt, connect a model, add one or two tools, and run it locally. The agent can answer questions, call an API, or process a document. It feels like the hard part is done.

Then production requirements arrive.

The agent must run on a schedule. It must process webhooks. It must use secrets safely. It must handle retries. It must not block a request for several minutes. It must expose tools as secure endpoints. It must keep logs so you can debug failures. It must run outside your laptop.

That is when many AI agent prototypes break.

Prototype architecture is usually too linear

A prototype often looks like this:

request → model call → tool call → another model call → response

That is fine for a demo. But real agent workloads are rarely that clean.

A production agent may need to:

- call multiple APIs;

- wait for slow external services;

- process large files;

- retry failed steps;

- store intermediate results;

- run asynchronously;

- notify a user later;

- keep sensitive keys out of the client;

- expose a stable endpoint for tools or webhooks.

Once these requirements appear, a single request/response function becomes fragile.
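Two items in the list above are "retry failed steps" and "store intermediate results." One way to address both is to split the agent into named steps and save each step's output before the next step starts. A rerun after a failure then resumes from the first unfinished step instead of starting over. The sketch below is a minimal version under stated assumptions. `run_pipeline` is a hypothetical helper, and the `checkpoint` dict stands in for durable storage such as a database row.

```python
from typing import Any, Callable

# A step receives the accumulated state and returns new values to merge into it.
Step = Callable[[dict[str, Any]], dict[str, Any]]


def run_pipeline(steps: list[tuple[str, Step]], checkpoint: dict[str, Any]) -> dict[str, Any]:
    """Run named steps in order, skipping any already recorded in `checkpoint`.

    `checkpoint` is a stand-in for durable storage (assumption). Each step's
    output is saved before the next step runs, so a retry after a failure
    resumes from the first unfinished step.
    """
    completed = checkpoint.setdefault("completed", [])
    state = checkpoint.setdefault("state", {})
    for name, step in steps:
        if name in completed:
            continue  # result already stored by a previous attempt
        state.update(step(state))
        completed.append(name)
    return state
```

Suppose a model call fails on the first attempt. Calling `run_pipeline` again with the same checkpoint skips the fetch step and runs only the summarization step.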

Problem 1: timeouts

AI workloads are unpredictable. A model call may return quickly or slowly. A tool may depend on a third-party API. A document-processing job may take seconds or minutes. A web scraper may be delayed by network conditions.

Synchronous request handlers are a poor fit for this kind of work. If the platform enforces a hard execution time limit, the function can be terminated in the middle of a workflow. That can happen after some tool calls have already run and before the result is saved.
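Restructuring the architecture is the main fix. A complementary safeguard is to give each step its own time budget, set shorter than the platform limit. A slow call then fails explicitly, with the step's name, instead of the whole invocation being killed. The sketch below uses `asyncio.wait_for`. `run_step`, `StepTimeout`, and `slow_tool` are hypothetical names for illustration.

```python
import asyncio


class StepTimeout(Exception):
    """Raised when a single workflow step exceeds its time budget."""


async def run_step(name: str, coro, timeout_s: float):
    """Await `coro`, but give up after `timeout_s` seconds.

    A named, explicit failure is easier to log and retry than a platform-level
    kill at the hard execution limit.
    """
    try:
        return await asyncio.wait_for(coro, timeout=timeout_s)
    except asyncio.TimeoutError as exc:
        raise StepTimeout(f"step {name!r} exceeded {timeout_s}s") from exc


async def slow_tool(delay_s: float) -> str:
    # Hypothetical stand-in for a third-party API or model call.
    await asyncio.sleep(delay_s)
    return "done"
```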

The better pattern is to separate the immediate API response from the long-running work:

API request → validate → start background job → return job ID

background job → run agent workflow → store result → notify user

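The client gets a fast response, and the job is no longer tied to the lifetime of a single HTTP request. Its progress can also be checked later by job ID, which makes the system more reliable and easier to observe.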
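A minimal in-process sketch of the two-part pattern above follows. It makes several assumptions. A background thread stands in for a real job runner or queue, and the `JOBS` dict stands in for a database. `start_job` and `get_status` are hypothetical names for what an API route and a status endpoint would call.

```python
import threading
import uuid
from typing import Any, Callable

# In-memory job store (assumption); a real deployment would use a database or queue.
JOBS: dict[str, dict[str, Any]] = {}
_LOCK = threading.Lock()


def _set(job_id: str, **fields: Any) -> None:
    with _LOCK:
        JOBS[job_id].update(fields)


def start_job(payload: dict[str, Any], workflow: Callable[[dict[str, Any]], Any]) -> str:
    """Validate the request, start the workflow in the background, return a job ID."""
    if "input" not in payload:
        raise ValueError("missing 'input'")
    job_id = uuid.uuid4().hex
    with _LOCK:
        JOBS[job_id] = {"status": "queued", "result": None, "error": None}

    def worker() -> None:
        _set(job_id, status="running")
        try:
            _set(job_id, status="succeeded", result=workflow(payload))
        except Exception as exc:  # record the failure instead of losing it
            _set(job_id, status="failed", error=str(exc))

    threading.Thread(target=worker, daemon=True).start()
    return job_id  # the HTTP handler can return this immediately


def get_status(job_id: str) -> dict[str, Any]:
    """What a status endpoint such as GET /jobs/{id} might return."""
    with _LOCK:
        return dict(JOBS[job_id])
```

The HTTP handler only runs validation and `start_job`, so it responds in milliseconds no matter how long the workflow takes.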

Problem 2: tool security

Agent tools are powerful. A tool might create an invoice, send an email, update a database, or call an internal API.

The model should not have unrestricted access to those operations. Each tool should be a backend function with clear boundaries.

A good tool function validates:

- who is calling it;

- what operation is requested;

- whether required fields exist;

- whether the action is allowed;

- how much data should be returned to the model.

Without that boundary, an agent can become a security problem instead of a productivity tool.
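The five checks listed above can be sketched as a single tool function. Everything here is hypothetical: the invoice tool, the caller names, the policy tables, and the `_backend` stub. In a real system the caller identity would come from a verified token, not a plain string.

```python
from typing import Any

# Hypothetical policy: which callers may use which operations.
ALLOWED_OPERATIONS = {
    "support-agent": {"get_invoice"},
    "billing-agent": {"get_invoice", "create_invoice"},
}
REQUIRED_FIELDS = {
    "get_invoice": {"invoice_id"},
    "create_invoice": {"customer_id", "amount_cents"},
}
VISIBLE_FIELDS = {"invoice_id", "status", "amount_cents"}  # all the model gets to see


class ToolError(Exception):
    pass


def _backend(operation: str, args: dict[str, Any]) -> dict[str, Any]:
    # Stand-in for the real billing API; the record includes an internal field.
    return {"invoice_id": args.get("invoice_id", "inv_new"), "status": "open",
            "amount_cents": args.get("amount_cents", 1200), "internal_notes": "do not expose"}


def invoice_tool(caller: str, operation: str, args: dict[str, Any]) -> dict[str, Any]:
    if caller not in ALLOWED_OPERATIONS:                  # 1. who is calling
        raise ToolError("unknown caller")
    if operation not in REQUIRED_FIELDS:                  # 2. what is requested
        raise ToolError(f"unknown operation {operation!r}")
    missing = REQUIRED_FIELDS[operation] - set(args)      # 3. required fields
    if missing:
        raise ToolError(f"missing fields: {sorted(missing)}")
    if operation not in ALLOWED_OPERATIONS[caller]:       # 4. is it allowed
        raise ToolError(f"{caller} may not {operation}")
    record = _backend(operation, args)
    return {k: v for k, v in record.items() if k in VISIBLE_FIELDS}  # 5. limit output
```

A `ToolError` can be returned to the model as a short refusal message, so the agent learns what it may not do without the operation ever running.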

Problem 3: secrets management

AI agents often use many external services: model providers, CRMs, billing APIs, notification tools, databases, search indexes, and internal systems.

Those credentials must not live in frontend code or prompts. They belong in environment variables or a dedicated secrets manager. They also need to be scoped carefully. A summarization tool should not automatically have access to billing credentials.

In production, secrets are not a convenience feature. They are part of the agent’s safety model.
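One way to make scoping explicit is to have each tool declare the secrets it needs and read nothing else. The sketch below assumes secrets arrive as environment variables. The `TOOL_SECRETS` mapping, `secrets_for`, and the variable names are hypothetical.

```python
import os
from collections.abc import Mapping

# Hypothetical declaration: each tool lists the only secrets it may read.
TOOL_SECRETS = {
    "summarize": ["MODEL_API_KEY"],
    "billing": ["MODEL_API_KEY", "BILLING_API_KEY"],
}


def secrets_for(tool: str, environ: Mapping[str, str] = os.environ) -> dict[str, str]:
    """Return only the secrets declared for `tool`; fail loudly if one is missing."""
    names = TOOL_SECRETS.get(tool)
    if names is None:
        raise KeyError(f"no secret scope declared for tool {tool!r}")
    missing = [n for n in names if not environ.get(n)]
    if missing:
        raise RuntimeError(f"missing secrets for {tool}: {missing}")
    return {n: environ[n] for n in names}
```

This only enforces the scope inside one process. Stronger isolation comes from deploying each tool as its own function with only its own variables configured.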

Problem 4: observability

When an agent gives a bad answer, you need to know why.

Was the prompt wrong? Did retrieval return bad context? Did a tool fail? Did an external API return an empty response? Did the agent skip a step? Did a retry duplicate an action?

Logs are useful, but multi-step AI workflows often need more structure:

step 1: validate input

step 2: retrieve context

step 3: call model

step 4: call tool

step 5: store result

step 6: notify user

If each step records its status, duration, input metadata, and logs, you can trace a bad answer back to the step that produced it.
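The per-step records described above can be captured with a small context manager. This is a sketch, not a tracing library. The in-memory `TRACE` list is an assumption standing in for a log or trace backend, and `step` is a hypothetical name.

```python
import time
from contextlib import contextmanager
from typing import Any, Iterator

TRACE: list[dict[str, Any]] = []  # stand-in for a log/trace backend (assumption)


@contextmanager
def step(name: str, **metadata: Any) -> Iterator[dict[str, Any]]:
    """Record name, status, duration, and input metadata for one workflow step."""
    record: dict[str, Any] = {"step": name, "status": "running", "metadata": metadata}
    start = time.perf_counter()
    try:
        yield record  # the body may add fields, e.g. record["tokens"] = ...
        record["status"] = "ok"
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise  # tracing must not swallow the failure
    finally:
        record["duration_ms"] = round((time.perf_counter() - start) * 1000, 2)
        TRACE.append(record)
```

In use, the agent wraps each step, for example `with step("retrieve context", query_chars=42): ...`. A failed tool call then shows up in the trace with its name, error, and duration.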

Problem 5: scheduled agents

Many useful agents are not triggered by chat. They run automatically.

Examples:

- check competitor pages every morning;

- summarize GitHub issues every hour;

- monitor failed payments;

- scan new leads and enrich them;

- generate weekly SEO reports;

- sync data between two systems.

This means agent infrastructure needs scheduling. A platform that only handles incoming HTTP requests is not enough.
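A scheduled agent also needs to remember what it already processed. The sketch below shows a handler that a scheduler might invoke every hour for the "summarize GitHub issues" example. It keeps a cursor so a repeated run does not re-summarize issues that an earlier successful run already covered. The `STATE` dict stands in for durable storage. The `fetch_since` and `summarize` callables are hypothetical placeholders for the real API and model calls. How the schedule itself is configured depends on the platform and is not shown.

```python
from datetime import datetime, timezone
from typing import Any, Callable, Optional

STATE: dict[str, datetime] = {}  # stand-in for durable storage of the cursor (assumption)


def hourly_issue_digest(
    fetch_since: Callable[[datetime], list[dict[str, Any]]],
    summarize: Callable[[list[dict[str, Any]]], str],
) -> Optional[str]:
    """Entry point a scheduler would call; processes only items newer than the cursor."""
    since = STATE.get("cursor", datetime.min.replace(tzinfo=timezone.utc))
    items = fetch_since(since)
    if not items:
        return None  # nothing new: skip the model call entirely
    digest = summarize(items)
    # Advance the cursor only after the summary succeeded, so a failed run is retried.
    STATE["cursor"] = max(item["updated_at"] for item in items)
    return digest
```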

Problem 6: webhooks

Webhooks are another common trigger for agents. A new Stripe event, GitHub issue, Slack command, or CRM update can start an AI workflow.

Webhook providers usually expect a fast acknowledgement and may retry the delivery if the response is slow or fails. That means the handler should verify the event, store it, return quickly, and continue work asynchronously.

A webhook-driven agent should not hold the provider request open while it runs a long LLM pipeline.
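The verify, store, and acknowledge sequence can be sketched as follows. Signature verification here is generic HMAC-SHA256 over the raw body, compared with `hmac.compare_digest`. Stripe, GitHub, and Slack each use their own header format and signed payload, so a real handler should follow the provider's documented scheme or SDK helper. `handle_webhook`, `EVENTS`, and `WORK_QUEUE` are hypothetical. The in-memory store and queue stand in for durable ones.

```python
import hashlib
import hmac
import json
import queue

EVENTS: dict[str, dict] = {}  # stand-in for a durable event store (assumption)
WORK_QUEUE: "queue.Queue[str]" = queue.Queue()  # drained by a background worker


def handle_webhook(body: bytes, signature: str, secret: bytes) -> tuple[int, str]:
    """Verify, store, enqueue, and acknowledge. No LLM work happens here."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, signature):  # constant-time comparison
        return 401, "invalid signature"
    event = json.loads(body)
    event_id = event["id"]
    if event_id in EVENTS:
        return 200, "duplicate ignored"  # providers may redeliver the same event
    EVENTS[event_id] = event
    WORK_QUEUE.put(event_id)  # the long LLM pipeline runs elsewhere
    return 202, "accepted"
```

Deduplicating by event ID matters because a provider retry after a slow response would otherwise start the same agent workflow twice.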

A better production pattern

A more reliable architecture looks like this:

API Gateway / webhook route

→ validate event

→ start job or pipeline

→ run AI/tool steps

→ store result

→ expose status endpoint or send notification

→ keep logs and traces

This separates public entry points from long-running processing. It also gives you better debugging and safer retries.
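"Safer retries" usually means two things. Transient failures are retried with backoff, and a side effect that already succeeded is not repeated when the job runs again. The sketch below combines both using an idempotency key. It assumes a durable store, represented here by the `COMPLETED` dict. `run_once` and key formats like `"job42:notify"` are hypothetical.

```python
import time
from typing import Any, Callable

# Stand-in for a durable table of completed side effects, keyed by idempotency key.
COMPLETED: dict[str, Any] = {}


def run_once(key: str, action: Callable[[], Any], attempts: int = 3,
             base_delay_s: float = 0.5) -> Any:
    """Run `action` successfully at most once per `key`, retrying transient failures."""
    if key in COMPLETED:
        return COMPLETED[key]  # already done by an earlier run: do not repeat it
    for attempt in range(1, attempts + 1):
        try:
            result = action()
        except Exception:
            if attempt == attempts:
                raise  # out of attempts: let the job be marked failed
            time.sleep(base_delay_s * 2 ** (attempt - 1))  # waits base, 2*base, 4*base, ...
            continue
        COMPLETED[key] = result
        return result
```

There is still a gap between the action succeeding and the key being recorded. A crash in that window can cause a duplicate. Where the downstream API accepts its own idempotency key, passing the same key there closes that gap.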

Where Inquir Compute fits

Inquir Compute is designed around the backend primitives that agent workloads need:

- API routes for tools and webhooks;

- scheduled jobs for recurring agents;

- background execution for slow workflows;

- isolated containers for functions;

- environment variables for credentials;

- logs and traces for debugging;

- browser-based deployment for fast iteration.

The goal is not to replace the model framework. You can still use LangChain, custom SDK calls, or plain HTTP requests. The goal is to give the agent a reliable runtime around those calls.

When simple serverless is enough

If your agent only handles quick requests and does not need schedules, webhooks, long-running work, or private tools, a simple function platform may be enough.

But if the agent becomes part of your backend, you need more than a function. You need routes, jobs, logs, secrets, schedules, and isolation.

Conclusion

AI agent prototypes fail in production when they are treated as prompts instead of systems.

The model is only one part. The rest is backend engineering: secure tools, background jobs, schedules, observability, retries, and deployment.

Serverless can be a strong fit for AI agents, but only if the platform supports the real workload around the model.