Why AI Agents Need a Real Backend

AI agents usually start as a prompt, a model call, and a few tool definitions. That is enough for a demo. It is not enough for production.

The moment an agent needs to interact with real systems, the problem is no longer just about prompts. You need a backend: authenticated HTTP routes, secrets, schedules, logs, retries, background jobs, and a safe place to run code.

This is where many AI prototypes break. The model works. The tool call works locally. The demo looks good. Then the agent needs to send emails, enrich leads, summarize documents, update records, call external APIs, or run every hour. Suddenly, you are not just building an AI agent. You are building infrastructure around it.

A prompt is not a system

A prompt can describe what an agent should do. A backend defines what the agent is allowed to do.

For example, an agent may need tools like:

- /search-customer

- /create-invoice

- /check-inventory

- /summarize-ticket

- /send-notification

Each of those tools needs real implementation behind it. The agent should not have direct, unlimited access to your database, billing provider, or internal APIs. Instead, every tool should be a small, controlled backend function with validation, authentication, logging, and clear input/output contracts.

That backend layer is what turns an agent from a clever prompt into a reliable application component.

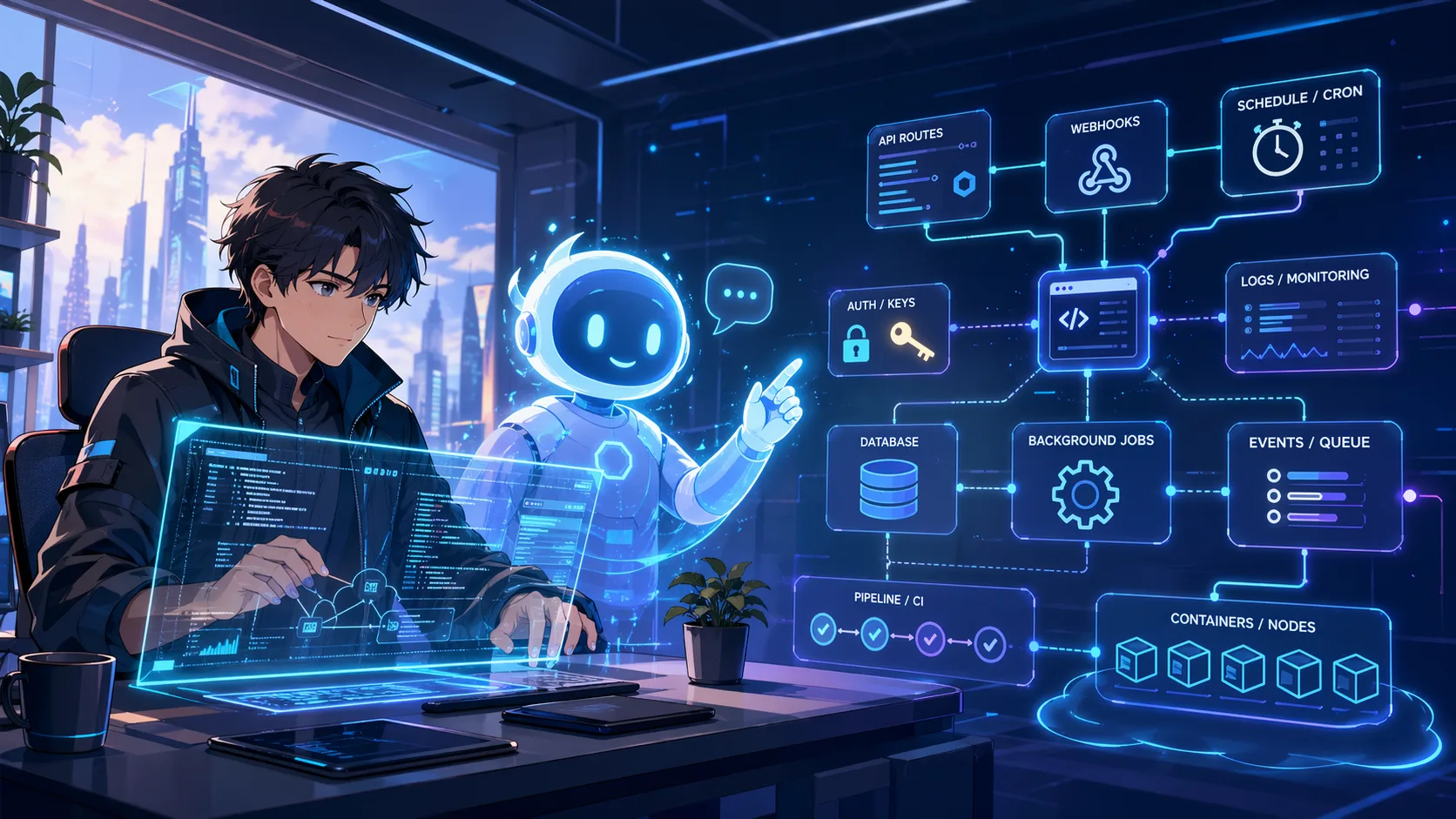

What production AI agents actually need

A production AI agent backend usually needs several primitives.

First, it needs HTTP routes. Most agent tools are easiest to expose as endpoints. The agent sends structured JSON, the backend validates it, performs the action, and returns structured JSON.
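The JSON-in, JSON-out contract can be sketched as a plain Python function. This is a minimal sketch, assuming a hypothetical `/check-inventory` tool backed by an in-memory `STOCK` table instead of a real inventory system. It is deliberately framework-free so it could sit behind any HTTP server or serverless entry point. Every path, errors included, returns an HTTP status code and a JSON body the agent can parse.

```python
import json

# Hypothetical in-memory data standing in for a real inventory API.
STOCK = {"sku_1": 12, "sku_2": 0}


def json_response(status: int, body: dict) -> tuple[int, str]:
    """Every outcome, errors included, is returned as (HTTP status, JSON text)."""
    return status, json.dumps(body)


def handle_check_inventory(method: str, raw_body: bytes) -> tuple[int, str]:
    """Hypothetical handler for POST /check-inventory."""
    if method != "POST":
        return json_response(405, {"error": "method_not_allowed"})
    try:
        payload = json.loads(raw_body)
    except (json.JSONDecodeError, UnicodeDecodeError):
        return json_response(400, {"error": "invalid_json"})
    if not isinstance(payload, dict):
        return json_response(400, {"error": "expected_json_object"})
    sku = payload.get("sku")
    if not isinstance(sku, str) or not sku:
        return json_response(422, {"error": "missing_field", "field": "sku"})
    if sku not in STOCK:
        return json_response(404, {"error": "unknown_sku", "sku": sku})
    quantity = STOCK[sku]
    # Structured success output with a fixed set of keys.
    return json_response(200, {"sku": sku, "inStock": quantity > 0, "quantity": quantity})
```

Calling `handle_check_inventory("POST", b'{"sku": "sku_1"}')` returns status 200 with the body `{"sku": "sku_1", "inStock": true, "quantity": 12}`. Returning machine-readable error codes instead of free text lets the agent (or the orchestration code around it) decide whether to fix its input or give up.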

Second, it needs secrets. API keys and credentials for OpenAI, Anthropic, Stripe, Slack, GitHub, CRMs, internal services, and databases should not live in the prompt or the frontend. They belong in environment variables or a secrets layer.
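A minimal sketch of the secrets side, assuming the credential is exposed to the process as a hypothetical `CRM_API_KEY` environment variable. The key is read on the server, used only to build outbound request headers, and masked if it ever has to appear in a log line. None of it is passed to the model.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not configured."""


def get_secret(name: str) -> str:
    """Read a secret from the environment and fail loudly if it is missing."""
    value = os.environ.get(name)
    if not value:
        raise MissingSecretError(f"secret {name!r} is not configured")
    return value


def mask(secret: str, visible: int = 4) -> str:
    """Hide all but the last few characters, for log lines only."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]


def crm_headers() -> dict[str, str]:
    # Hypothetical variable name; these headers go from the backend to the CRM,
    # never into a prompt or a response the model can read.
    return {"Authorization": f"Bearer {get_secret('CRM_API_KEY')}"}
```

Failing at startup or on first use when a secret is missing is usually easier to debug than letting an external API reject an empty key later.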

Third, it needs background jobs. Not every tool call should block the user. Some work takes too long: scraping a website, generating a report, processing a file, or running a multi-step enrichment flow. The agent should be able to start a job and return quickly.

Fourth, it needs schedules. Many useful agents are not chat-driven. They run every hour, every night, or every Monday. Monitoring competitors, checking for broken pages, summarizing new issues, and syncing external data are all typical scheduled tasks.
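At its core, a schedule is a rule for computing the next run time. The sketch below assumes naive local datetimes and ignores time zones and daylight-saving shifts; it only shows what "every hour" and "every Monday at 09:00" mean concretely. In practice a managed cron-style scheduler would trigger the job at these times rather than a hand-written loop.

```python
from datetime import datetime, timedelta


def next_hourly_run(now: datetime) -> datetime:
    """Next top of the hour strictly after `now`."""
    return now.replace(minute=0, second=0, microsecond=0) + timedelta(hours=1)


def next_weekly_run(now: datetime, weekday: int, hour: int) -> datetime:
    """Next `weekday` (Monday=0, Sunday=6) at `hour`:00, strictly after `now`."""
    candidate = now.replace(hour=hour, minute=0, second=0, microsecond=0)
    candidate += timedelta(days=(weekday - now.weekday()) % 7)
    if candidate <= now:
        # Today is the right weekday but that time has passed: use next week.
        candidate += timedelta(days=7)
    return candidate
```

For example, `next_weekly_run(datetime(2024, 1, 3, 10, 30), weekday=0, hour=9)` (a Wednesday) returns `datetime(2024, 1, 8, 9, 0)`, the following Monday.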

Fifth, it needs observability. When an agent fails, you need to know which step failed, what input it received, which external API returned an error, and whether the failure is safe to retry.
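A minimal sketch of step-level logging, assuming a hypothetical `run_step` wrapper and a `RetryableError` type that marks failures safe to retry. Each step emits one JSON record with the run ID, step name, input, outcome, duration, and whether the error is retryable, which answers the questions above. Inputs are logged verbatim here; real payloads may need redaction first.

```python
import json
import logging
import time
from typing import Any, Callable

logger = logging.getLogger("agent")


class RetryableError(Exception):
    """Marks failures that are safe to retry, such as an upstream timeout."""


def run_step(run_id: str, step: str, fn: Callable[[dict], Any],
             payload: dict, records: list) -> Any:
    """Run one agent step and emit one structured record whether it succeeds or fails."""
    started = time.monotonic()
    record: dict[str, Any] = {"runId": run_id, "step": step, "input": payload}
    try:
        result = fn(payload)
        record["status"] = "ok"
        return result
    except Exception as exc:
        record.update(
            status="error",
            error=f"{type(exc).__name__}: {exc}",
            retryable=isinstance(exc, RetryableError),
        )
        raise
    finally:
        record["durationMs"] = round((time.monotonic() - started) * 1000, 1)
        records.append(record)  # kept in memory here so the sketch is easy to inspect
        logger.info(json.dumps(record, default=str))
```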

The common mistake: putting everything in one function

A common early pattern is one large handler:

receive request → call model → call APIs → write database → send notification → return response

This works until one part becomes slow or unreliable. Maybe the model response is delayed. Maybe an external API times out. Maybe a webhook provider expects a fast response. Maybe the process takes longer than your hosting provider allows.

A better architecture is to split the system into smaller units:

HTTP route → validate input → start job → run agent steps → log result → notify user

This gives you better control. You can retry a single step. You can inspect logs. You can run long work asynchronously. You can require extra validation for dangerous tools.
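Retrying one step rather than the whole flow can be sketched like this. It assumes a hypothetical `TransientError` for failures worth retrying (timeouts, rate limits) and uses exponential backoff; any other exception propagates immediately. The `sleep` parameter is injectable only so the behavior can be checked without waiting.

```python
import time
from typing import Any, Callable


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


def with_retry(fn: Callable[[Any], Any], payload: Any, attempts: int = 3,
               base_delay: float = 0.5,
               sleep: Callable[[float], None] = time.sleep) -> Any:
    """Retry one step on TransientError with exponential backoff; other errors propagate."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return fn(payload)
        except TransientError:
            if attempt == attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...


def run_pipeline(steps: list[Callable[[Any], Any]], payload: Any) -> Any:
    """Run steps in order, feeding each one the previous step's output."""
    for step in steps:
        payload = with_retry(step, payload)
    return payload
```

Because a step may run more than once, steps that write data or send notifications should be idempotent, for example by keying writes on a job ID.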

Agent tools should be boring backend functions

There is a lot of excitement around agents, but the tool layer should be boring. That is a good thing.

A tool function should:

- accept a small JSON payload;

- validate required fields;

- check authentication;

- call only the systems it is allowed to call;

- return predictable JSON;

- write logs that help debugging;

- avoid exposing secrets to the model.
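The checklist above can be sketched as a single tool function. This is an illustration, assuming a hypothetical `search_customer` tool, a stubbed CRM client (`fetch_customer`), and server-side tokens in hypothetical `TOOL_AUTH_TOKEN` and `CRM_API_KEY` environment variables. The tool returns only whitelisted fields, so internal data and the CRM key never reach the model.

```python
import hmac
import json
import logging
import os
from typing import Optional

log = logging.getLogger("tools.search_customer")

# Hypothetical CRM data; a real tool would call the CRM's API.
_FAKE_CRM = {
    "cus_123": {"id": "cus_123", "name": "Acme Ltd", "plan": "pro", "internalNotes": "do not share"},
}
PUBLIC_FIELDS = ("id", "name", "plan")


def fetch_customer(customer_id: str, api_key: str) -> Optional[dict]:
    """Hypothetical CRM client: the API key is used here and never returned."""
    return _FAKE_CRM.get(customer_id)


def search_customer(payload: dict, auth_token: str) -> dict:
    # Check authentication against a token configured on the server.
    expected = os.environ.get("TOOL_AUTH_TOKEN", "")
    if not expected or not hmac.compare_digest(auth_token.encode(), expected.encode()):
        return {"ok": False, "error": "unauthorized"}
    # Validate required fields before touching any external system.
    customer_id = payload.get("customerId")
    if not isinstance(customer_id, str) or not customer_id.startswith("cus_"):
        return {"ok": False, "error": "invalid_customerId"}
    # Call only the one system this tool is allowed to use.
    record = fetch_customer(customer_id, api_key=os.environ.get("CRM_API_KEY", ""))
    # Log enough to debug, without secrets or the full record.
    log.info(json.dumps({"tool": "search_customer", "customerId": customer_id,
                         "found": record is not None}))
    if record is None:
        return {"ok": False, "error": "not_found"}
    # Predictable JSON: the same keys on every success.
    return {"ok": True, "customer": {k: record[k] for k in PUBLIC_FIELDS}}
```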

For example:

```json
{
  "customerId": "cus_123",
  "action": "summarize_recent_activity"
}
```

The backend decides whether the request is valid, which APIs to call, and what result to return. The model should not be responsible for security boundaries.
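A minimal sketch of that boundary for the payload above. The `get_plan` action, the `cus_` ID format, and both handlers are hypothetical stubs; the point is that the backend owns the list of permitted actions and the model can only choose from it.

```python
import re

CUSTOMER_ID = re.compile(r"cus_[A-Za-z0-9]+")  # hypothetical ID format


def summarize_recent_activity(customer_id: str) -> dict:
    # Hypothetical stub: a real handler would read an activity log and call a model.
    return {"customerId": customer_id, "summary": "(stub summary)"}


def get_plan(customer_id: str) -> dict:
    # Hypothetical stub: a real handler would query the billing provider.
    return {"customerId": customer_id, "plan": "(stub plan)"}


# The backend, not the model, defines which actions exist.
ALLOWED_ACTIONS = {
    "summarize_recent_activity": summarize_recent_activity,
    "get_plan": get_plan,
}


def handle_customer_request(request: dict) -> dict:
    customer_id = request.get("customerId")
    action = request.get("action")
    # fullmatch rejects trailing characters such as "cus_123\n" or "cus_1; DROP".
    if not isinstance(customer_id, str) or not CUSTOMER_ID.fullmatch(customer_id):
        return {"ok": False, "error": "invalid_customerId"}
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return {"ok": False, "error": "action_not_allowed"}
    return {"ok": True, "result": ALLOWED_ACTIONS[action](customer_id)}
```

If the model asks for `"action": "delete_customer"`, the request is rejected with `action_not_allowed` no matter how the prompt was phrased.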

Where Inquir Compute fits

Inquir Compute is a good fit for this layer because it is not only a place to run a single function. It combines several primitives that AI agent backends usually need together:

- serverless functions;

- API routes;

- scheduled jobs;

- background execution;

- environment variables and secrets;

- logs and traces;

- isolated container runtime;

- browser-based deployment.

That matters because AI agents often need a mix of routes, cron jobs, and long-running work. If you split those across too many services, the agent backend becomes harder to reason about than the agent itself.

Example architecture

A simple AI support agent backend could look like this:

- /support-agent/message: receives a user message and starts a job;

- /jobs/classify-ticket: calls an LLM to classify urgency and topic;

- /jobs/enrich-customer: fetches customer data from the CRM;

- /jobs/draft-response: generates a suggested answer;

- /jobs/notify-team: sends a Slack notification when human review is needed.

The important point is that the AI part is only one part of the system. The backend controls flow, tools, safety, retries, and logging.
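The support flow can be sketched in-process to show where the control logic lives. This is an illustration only: the classifier, CRM lookup, drafting, and Slack steps are hypothetical stubs (a keyword rule stands in for the LLM), and in the architecture described here each step would run as its own job rather than as a direct function call. The human-review rule is also an assumption, chosen to show that the backend, not the model, makes that decision.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    customer_id: str
    message: str
    urgency: str = ""
    topic: str = ""
    customer: dict = field(default_factory=dict)
    draft: str = ""
    needs_review: bool = False


def classify_ticket(t: Ticket) -> None:
    # Stand-in for /jobs/classify-ticket: a keyword rule replaces the LLM call.
    text = t.message.lower()
    t.urgency = "high" if ("down" in text or "urgent" in text) else "normal"
    t.topic = "billing" if ("invoice" in text or "charge" in text) else "technical"


def enrich_customer(t: Ticket) -> None:
    # Stand-in for /jobs/enrich-customer: a real job would query the CRM.
    t.customer = {"id": t.customer_id}


def draft_response(t: Ticket) -> None:
    # Stand-in for /jobs/draft-response: a real job would ask a model for a draft.
    t.draft = f"Thanks for reaching out about your {t.topic} issue. We are looking into it."


def notify_team(t: Ticket, outbox: list) -> None:
    # Stand-in for /jobs/notify-team: appends to a list instead of posting to Slack.
    outbox.append(f"[{t.urgency}] {t.topic} ticket from {t.customer_id} needs review")


def handle_support_message(customer_id: str, message: str, outbox: list) -> Ticket:
    """Stand-in for /support-agent/message, running each step in order."""
    t = Ticket(customer_id, message)
    classify_ticket(t)
    enrich_customer(t)
    draft_response(t)
    # Hypothetical policy: urgent or billing tickets always get a human reviewer.
    t.needs_review = t.urgency == "high" or t.topic == "billing"
    if t.needs_review:
        notify_team(t, outbox)
    return t
```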

When you do not need a backend like this

You may not need this architecture if your agent is only a local prototype, a single-user script, or a simple chatbot that does not call external systems. For those cases, a notebook or a minimal app is enough.

But once your agent touches production data, runs on a schedule, calls private APIs, processes files, or needs execution history, it needs a real backend.

Conclusion

AI agents do not become useful just because they can reason. They become useful when they can safely act.

That requires backend infrastructure: routes, tools, secrets, jobs, schedules, and observability. The best agent architecture treats the model as the reasoning layer and the backend as the control layer.

If you are building agents that need to do real work, start by designing the backend around them. The prompt is only the beginning.